Over the past few months, I’ve become a more avid follower and reader of essays published on Substack. A recent essay by Brendan McCord, “You Are Not a Function,” argues something that feels both obvious and increasingly neglected: higher education institutions were not designed to produce workers; they were designed to form a foundation for intellectual development.

That distinction between formation and function is not new. According to McCord, it traces back to Wilhelm von Humboldt, a Prussian diplomat and the founding visionary of the modern research university. But as McCord observes, while the architecture of that vision still stands, its animating purpose has largely been hollowed out. Credentialism has overtaken formation.

What makes the essay timely is not simply its critique of universities. It is the context in which that critique is delivered: the rise of artificial intelligence. McCord’s premise is that AI doesn’t just change what we know. It changes how we think, and over time, whether we think at all.

The Quiet Dependency Problem

One of the most compelling observations in the essay is that many people who use AI today are drawing on intellectual habits formed before AI became ubiquitous. They know when to trust a result and when to challenge it because they learned how to read carefully, argue rigorously, and sustain attention without technological scaffolding.

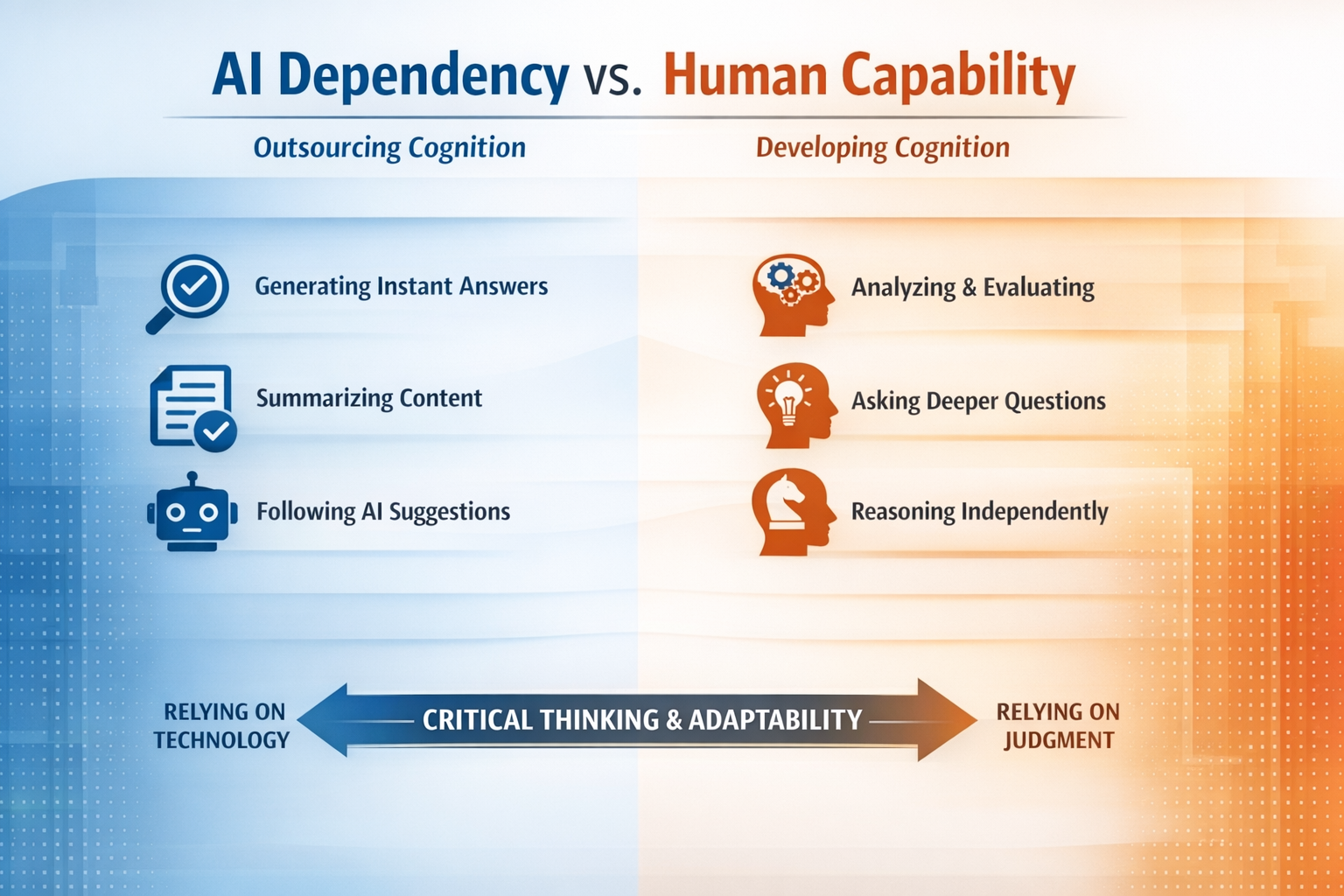

That point deserves more emphasis than the article gives it. We are now entering a period in which those habits may no longer be formed at scale. If that happens, the consequence is not simply weaker graduates. It is a subtle but profound shift in human capability:

- from judgment to prompting

- from understanding to summarization

- from reasoning to selection among outputs

The danger is that students will never develop the cognitive independence required to use AI well. That is where the article’s critique of career-oriented education becomes more than philosophical; it becomes structural.

The False Tradeoff Between Utility and Formation

McCord is right to push back against the growing chorus, from technologists and investors alike, that higher education should focus narrowly on employable skills. In a recent article, I argued for the merits of a liberal arts degree in the era of AI. Others often frame the argument incorrectly as a binary choice between preparing students for careers and developing them as whole human beings.

The historical insight behind Humboldt’s Bildung, the ideal that guided the founding of the University of Berlin, is that these are not competing goals. A broad intellectual formation produces better professionals: more adaptable, more resilient, and better able to operate in uncertain environments.

The issue is that modern institutions have redefined “career preparation” in overly narrow terms, such as:

- mastering current tools instead of underlying concepts

- optimizing for first job placement instead of long-term adaptability

- rewarding correctness instead of intellectual rigor

In an AI-mediated world, those choices become liabilities. Why? Because the half-life of technical skills is shrinking, while the value of judgment is rising. I used ChatGPT to generate the infographic below illustrating the differences between outsourcing cognition and developing it.

AI as a Force Multiplier, For Better or Worse

Far from eliminating the need for critical thinking, artificial intelligence amplifies the consequences of having or lacking it. A student with strong analytical habits uses AI to:

- test hypotheses

- explore alternative framings

- accelerate iteration

A student without those habits uses AI to:

- generate answers that they cannot evaluate

- accept outputs they do not fully understand

- outsource thinking entirely

The same tool produces radically different outcomes depending on the user’s intellectual formation. This is the central point McCord emphasizes, and one that higher education has not yet fully absorbed. We cannot simply integrate a new technology into the curriculum without redefining the baseline assumptions about cognition itself.

What Educators Should Do Differently

If we take the argument seriously, the response cannot be cosmetic. Merely adding AI modules or updating course content is not enough. It requires rethinking how we design learning environments.

Three shifts seem particularly important.

1. Design for Cognitive Resistance, Not Efficiency

McCord emphasizes that formation occurs through “encounter with what resists us,” namely, difficult texts, complex problems, and sustained effort. AI removes much of the friction students would otherwise encounter. A good curriculum must reintroduce it intentionally. That means including:

- assignments that cannot be completed through simple prompting

- problems that require iteration, failure, and revision

- evaluation based on reasoning process, not just final output

In other words, we must preserve the conditions under which thinking develops.

2. Separate “AI-Assisted Work” from “AI-Free Work”

I suspect that most institutions are drifting toward an unexamined middle ground in which AI is broadly encouraged in courses without any redefinition of expectations. That is a mistake. To build the same intellectual foundation that many of us who use AI today already have, students will need:

- AI-free environments to build foundational reasoning skills

- AI-integrated environments to learn how to use tools responsibly

Without that separation, we risk short-circuiting the very capabilities the technology claims to enhance.

3. Assess Judgment, Not Just Knowledge

Traditional assessment models reward recall, procedural accuracy, and compliance with instructions. AI can now outperform students on all three. What AI cannot replicate is judgment:

- knowing when an answer is wrong

- recognizing ambiguity

- framing better questions

Curriculum should shift toward:

- oral defenses

- iterative projects

- open-ended problem framing

If we continue to assess what machines do well, we will train students to become mere moderators of AI output. If we assess judgment instead, our future college graduates will know when an answer is wrong, will recognize ambiguity, and will reframe questions to get better AI-generated output.

The Institutional Constraint Few Want to Address

There is a deeper tension underlying all of this, one that McCord’s essay hints at but does not fully explore. Higher education institutions are not insulated environments. They are shaped by incentives:

- enrollment pressures

- student satisfaction metrics

- job placement statistics

- cost structures tied to scale

Those incentives push toward efficiency, standardization, and measurable outcomes, all of which favor function over formation. Institutions tend to optimize for what their economic structures reward, even when that diverges from their stated mission.

“What should educators do?” is too simple a question for this moment. We need to be asking, “What are institutions structurally able to sustain over time?” That is a governance issue as much as a pedagogical one.

A Closing Thought for Presidents, Provosts, and Trustees

McCord’s essay asks a deceptively simple question:

What is a student, and how should education serve them?

In the age of AI, that question becomes operational. If higher education continues down its current path of optimizing for short-term employability while outsourcing cognition to machines, it risks producing graduates who are less capable of independent thought than the generations before them.

And if that happens at scale, the implications extend well beyond the labor market. They reach the foundations of civic life, leadership, and human autonomy itself. The irony is that the more powerful our tools become, the more we depend on the quality of the minds using them.

Higher education cannot afford to forget that.