ChatGPT and other generative AI tools are no longer “new” news. However, the interest in AI that they unleashed, among both users and producers, continues to accelerate. In the early days of ChatGPT, I could cover many of the latest AI advances in a monthly article. That’s impossible now.

It’s hard not to write about AI, particularly when applications like ChatGPT are accessible to many. Depending on your level of education and experience, they can also be easy to use.

Last week, I received an email from McKinsey & Co. with a link to the 100 articles it has published since the public release of ChatGPT.

I don’t have personnel and resources comparable to McKinsey’s. However, a quick review of my blog shows that I’ve been reviewing books about AI and writing articles about AI for the past 10 years. In a February 2020 article, I posed the question “Artificial Intelligence is Everywhere – Will You Be Prepared?”

In an August 2022 review of an open issue of Daedalus titled “AI & Society,” I wrote that the topic “On the Economy and the Future of Work” interested me the most. I was intrigued by economist Michael Spence’s chapter, “Automation, Augmentation, Value Creation & the Distribution of Income & Wealth.”

In this overview of the news about AI that I found interesting since my last review, none of the articles or books are as long as the 372-page Daedalus issue. Even the U.S. Senate Working Group on AI issued a short 31-page report.

I hope you enjoy the updates. Undoubtedly, more will come.

AI Books

Author Salman Khan appeared on MSNBC for an interview about his new book, Brave New Words: How AI Will Revolutionize Education (And Why That’s a Good Thing). Khan shows there are scalable technologies that, used appropriately, can make a big difference for learners, particularly when connected with teachers.

I haven’t read Khan’s new book, but his Khan Academy work has enhanced learning for millions of students over the years. I expect his ideas about using AI will be useful.

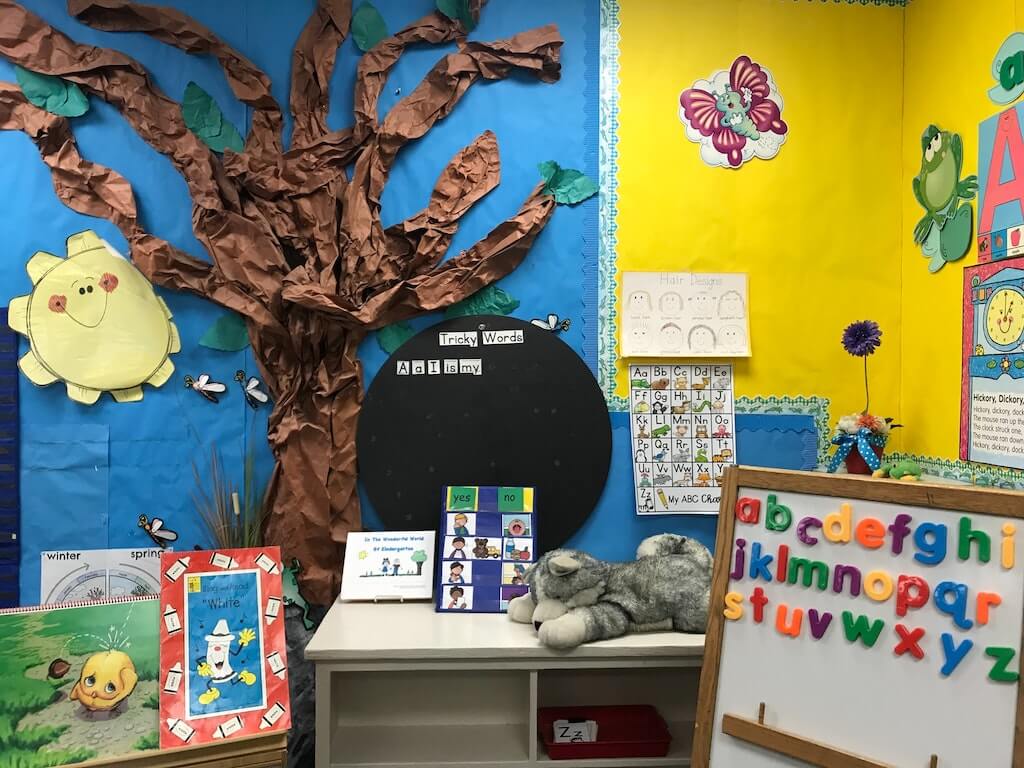

AI in Teaching

RAND recently released the results of a survey of K-12 teachers in public schools. The survey aimed to determine the percentage of teachers using AI tools in the classroom and how they use these tools.

I was pleasantly surprised to learn that 18% of teachers reported using AI tools in their teaching and that an additional 15% had tried them. It was not surprising that the wealthiest school districts have a much higher percentage of teachers who report using AI tools in their teaching than less-funded districts.

Notably, the RAND findings differ from those of a Pew Research Center survey, which found that 25% of U.S. K-12 teachers say AI tools do more harm than good. In the same survey, 32% of teachers said AI provides an equal mix of good and harm, and another 35% were not sure.

High school teachers are more likely than elementary and middle school teachers to have negative views about AI. In a separate survey of students, Pew reported different results.

John Dolman’s blog article “AI Won’t Transform Education” raises an interesting premise. Dolman, an English teacher, is committed to teaching with AI tools. However, he argues that the fundamental barrier to transforming education is the assessment system.

According to Dolman, students are still assessed based “on written, terminal assessments that focus on narrow ranges of knowledge and an even narrower skills base. We have built an education system around our assessments and have not designed assessments that truly show an individual’s unique capabilities.”

Dolman believes AI exposes the weakness of these assessments by showing that nothing they test is truly unique. He also believes that AI has the power to transform assessment.

In an interview on YouTube, Ryan Lufkin, Canvas’ VP of Global Academic Strategy, provided an update on the company’s initiatives to add AI tools to its Canvas Learning Management System (LMS). It was not surprising to learn that the company put safeguards in place before adding these enhancements.

Google’s NotebookLM, powered by Gemini 1.5 Pro, added an AI tutor feature. Josh Woodward gave a demo, which was recorded and is available on YouTube. The tutor draws on the materials the user adds and generates an audio discussion for the student, who can join the conversation by asking questions and receive responses.

AI in Higher Education

The Middle States Commission on Higher Education hosted a webinar featuring a presentation titled “Harnessing the Power of AI in Higher Education” by Dr. Tricia Bertram-Gallant, Director of Academic Integrity & Triton Testing at the University of California, San Diego. Dr. Bertram-Gallant’s research focuses on academic integrity.

Dr. Bertram-Gallant believes that most instructors teach without considering the ways AI can be used to cheat. The International Center for Academic Integrity hosts the Integrity Matters blog, to which Dr. Bertram-Gallant frequently contributes. Her new book, The Opposite of Cheating: Teaching for Integrity in the Age of GenAI, will be published next year.

Dr. Bertram-Gallant offers many good ideas in this hour-and-a-half webinar, and if you have the time, I recommend watching it. Not all of it is lecture: she frequently polls the viewers, and that activity takes up some of the time.

Developing and certifying knowledge and abilities is the social contract between higher education institutions and the public. Instructors must design fair and honest pedagogy and assessments. Students must fairly and honestly demonstrate learning. Instructors must fairly and honestly evaluate student learning that the institution certifies.

Generative AI can disrupt the process if students use it and instructors don’t adapt their instruction to maintain the integrity of their assessments. Dr. Bertram-Gallant states that it’s super easy for students to use GenAI tools like ChatGPT. Given these realities, we can use GenAI to empower teaching and learning.

Fast Company published an article by Tigran Sloyan titled “AI will revolutionize higher education (if universities will allow it).” The article’s premise is that universities need to partner with industry to ensure that what they teach gives students the skills employers expect.

AI Regulations

The U.S. Senate Working Group released a report titled Driving U.S. Innovation in Artificial Intelligence. The 31-page bipartisan report is subtitled A Roadmap for Artificial Intelligence Policy in the United States Senate.

Members of the group include Majority Leader Chuck Schumer and Senators Mike Rounds, Martin Heinrich, and Todd Young. The group’s first initiative was hosting three educational briefings on AI in the summer of 2023, including a classified briefing.

Addressing more specific policy domains, the AI Working Group hosted nine bipartisan AI Insight Forums in the fall of 2023. The topics for these forums included:

- Inaugural Forum

- Supporting U.S. Innovation in AI

- AI and the Workforce

- High-Impact Uses of AI

- Elections and Democracy

- Privacy and Liability

- Transparency, Explainability, Intellectual Property, and Copyright

- Safeguarding Against AI Risks

- National Security

More than 150 experts took part in the forums, and the report’s appendix lists each participant.

The Working Group encouraged the Senate Appropriations Committee to develop emergency appropriations language to fill the gap between current spending levels and the spending level recommended by the National Security Commission on Artificial Intelligence (NSCAI), with the following priorities:

- Funding for a cross-government AI research and development effort to include:

  - Fundamental and applied science, such as biotechnology, advanced computing, robotics, and material science.

  - Foundational trustworthy AI topics, such as transparency, explainability, privacy, interoperability, and security.

- Funding the outstanding CHIPS and Science Act accounts not yet funded, particularly those for AI, including:

  - NSF Directorate for Technology, Innovation, and Partnerships

  - DOE National Labs through the Advanced Scientific Computing Research Program in the DOE Office of Science

  - DOE Microelectronics Programs

  - NSF Education and Workforce Programs, including the Advanced Technical Education (ATE) Program

- Funding, as needed, for the DOC, DOE, NSF, and DOD to support semiconductor R&D. These funds are specific to the design and manufacturing of future generations of high-end AI chips. They support the goals of ensuring increased American leadership in cutting-edge AI through the co-design of AI software and hardware and developing new techniques for semiconductor fabrication that can be implemented domestically.

- Authorizing the National AI Research Resource (NAIRR) by passing the CREATE AI Act and funding it as part of the cross-government AI initiative. Additionally, expanding programs such as the NAIRR and the National AI Research Institutes to ensure all 50 states can participate in the AI research ecosystem.

- Funding a series of “AI Grand Challenge” programs drawing inspiration from and leveraging the success of similar programs run by DARPA, DOE, NSF, NIH, and others, such as the private-sector XPRIZE. The focus will be on technical innovation challenges in applications of AI that would transform the process of science, engineering, or medicine.

- Funding for AI efforts at NIST, including AI testing and evaluation infrastructure and the U.S. AI Safety Institute. Additionally, funding for NIST’s construction account to address years of backlog in maintaining NIST’s physical infrastructure.

- Funding to allow the Bureau of Industry and Security (BIS) to update its IT infrastructure and buy modern data analytics software. The goal is to give BIS the personnel and capabilities for prompt, effective action and enhanced interagency support for its monitoring of compliance with export control regulations.

- Funding R&D activities and developing appropriate policies at the intersection of AI and robotics. The goal is to advance national security, workplace safety, industrial efficiency, economic productivity, and competitiveness through a coordinated interagency initiative.

- Supporting a NIST and DOE testbed to identify, test, and synthesize new materials to support advanced manufacturing. The testbed would use AI autonomous laboratories and AI integration with other emerging technologies, such as quantum computing.

- Providing local election assistance funding to support AI readiness and cybersecurity through the Help America Vote Act (HAVA) Election Security grants.

- Providing funding and strategic direction to modernize the federal government and improve the delivery of government services. These funds would support activities such as updating IT infrastructure to use modern data science and AI technologies and deploying new technologies to find inefficiencies in the U.S. Code, federal rules, and procurement programs.

- Supporting R&D and interagency coordination around the intersection of AI and critical infrastructure, including smart cities and intelligent transportation system technologies.

The AI Working Group also encouraged the “relevant” committees to develop legislation to leverage public-private partnerships across the federal government. The legislation would support AI advancements and minimize potential risks from AI.

The Working Group also addressed the impact of AI on the workforce. It encouraged the development of legislation related to training, retraining, and upskilling the private sector workforce to participate in an AI-enabled economy.

They recognized the promise of federal adoption of AI to improve government service delivery and modernize internal governance. Such measures would also include upskilling existing federal employees to maximize the beneficial use of AI.

The group recognized AI’s potential to influence elections and acknowledged the work of the U.S. Election Assistance Commission (EAC) and the Cybersecurity and Infrastructure Security Agency (CISA) to protect elections.

The Working Group discussed privacy and liability, as well as transparency, explainability, intellectual property, and copyright. It encouraged the relevant committees to consider legislation addressing the impact that AI may have in these areas.

The report discussed safeguarding against AI risks. The Working Group encouraged companies to perform detailed testing and evaluation to understand the landscape of potential harms. However, these recommendations do not go as far as the European Union’s new AI Act.

The report also addressed national security and the uncertainty about whether general-purpose AI systems will achieve artificial general intelligence (AGI). Again, the relevant committees were encouraged to consider these issues in the future.

After reading the report (which I encourage many of you to do), I believe the term “Roadmap” is a misnomer. The report thoroughly lists almost every issue related to AI, but it is not a roadmap in the sense that it does not direct Senate committees along a recommended path over the next few years.

It will be important to watch the bills proposed in the Senate as an outcome of this roadmap. I expect most of them will surface through appropriations requests. Given the controversy surrounding the EU AI Act’s passage, the bipartisan group does not appear, at this time, to have the determination to draft a bill as specific as that law.

ChatGPT and LLM News

OpenAI released a new AI model, GPT-4o. The “o” stands for “omni.” Ethan Mollick provided the most informative overview of the release in his Substack column, “One Useful Thing.” Mollick wrote that the release has three major implications.

- Education: GPT-4 is a powerful tutor and teaching tool. “With universal free access, the educational value of AI skyrockets, and that doesn’t count voice and vision.” The homework apocalypse will reach its final stages. Cheating will become ubiquitous, as will high-end tutoring.

- Work: With free access to GPT-4o, employees can begin building GPTs without outside permission. Mollick believes entire departments will be filled with GPTs that automate work, and employees will not tell their companies. Getting employees to share that information will be a challenge.

- Global entrepreneurship: GPT-4o will be available globally. Everyone will be able to write in perfect English, write computer code, and get help with problems. Entrepreneurs will get advice to improve their businesses regardless of their location.

AI Productivity Tools

Metropolitan State University of Denver issued a Prompt Engineering Guide for creating and using prompts for generative AI. The guide is still a work in progress, but its creators attempted to include guidance for both novice and experienced generative AI users.

EduWriter.ai announced the launch of its platform offering writing tools and educational resources. I checked it out briefly using the free version (a premium version is also available) and was impressed by the 295-word paper, including legitimate sources, that it produced in less than a minute. I plan to keep an eye on this platform.

AI Research

Two AI research projects at Stanford University received National Science Foundation (NSF) grants as part of a pilot program to democratize AI research.

A team from the Stanford Intelligent and Interactive Autonomous Systems Group (ILIAD) submitted a proposal to continue work in the domain of human-robot and human-AI interactions. The project focuses on learning effective reward functions for robotics using large datasets and human feedback.

A project from the Stanford School of Medicine’s Clinical Excellence Research Center (CERC) seeks to develop computer vision models that can collect and analyze comprehensive video data from ICU patient rooms to help doctors and nurses better track patients’ health.

AI Webinars and Conferences

The GAIL Conference (Generative AI in Libraries) will be held virtually on June 11, 12, and 13 from 1 to 4 p.m. Eastern time. This year’s theme is “Prompt or Perish: Navigating Generative AI in Libraries.” Given that this is a virtual conference, I was surprised to see that registration was already full as of May 6.

Future of Work with AI

The University of Wisconsin-Milwaukee announced it will house the nation’s first manufacturing-focused AI Co-Innovation Lab. The lab will connect Wisconsin manufacturers and other companies with Microsoft’s AI experts and developers. The goal is to serve 270 Wisconsin companies by 2030.

McKinsey’s talent leaders published a podcast titled “Gen AI talent: Your next flight risk.” The premise is that workers skilled in generative AI are more likely to quit than other workers, and those who self-identify as creators and heavy users are at the highest risk. Retaining them is more about making these employees feel part of a supportive community than about pay.

The McKinsey partners were surprised by the large number of non-users of generative AI in the companies surveyed. They recommend that companies upskill employees and broaden access to gen AI tools and use cases. They also note that it doesn’t seem sustainable to have such a small group of heavy users and creators.

Final Thoughts

As I have pointed out in previous articles, artificial intelligence is not new. The term first surfaced in the 1950s.

Generative AI is not the only type of artificial intelligence. Still, its open access to the masses has stimulated more product development and discussions about its implications for education and the workforce than any other form of AI.

Of all the items I covered in this update, the GPT-4o release and the Senate’s bipartisan report may be the two most significant. Even though the term “roadmap” may be a misnomer, I’ll be looking for future reports from the U.S. Senate and its committees regarding AI.

It is nearly impossible to keep up with the latest news about AI, AI productivity tools, and releases of AI-enabled software. Whenever news becomes this widespread, I usually choose to focus on a specific area. In this case, I intend to focus on two areas: teaching and upskilling.

If I write about something else, it will probably be important or an exception.